TITLE: Semantic Object Parsing with Graph LSTM

AUTHORS: Xiaodan Liang, Xiaohui Shen, Jiashi Feng, Liang Lin, Shuicheng Yan

ASSOCIATION: National University of Singapore, Sun Yat-sen University, Adobe Research

FROM: arXiv:1603.07063

CONTRIBUTIONS

- A novel Graph LSTM structure is proposed to handle general graph-structured data. It exploits global context effectively by operating on superpixels extracted through over-segmentation.

- A confidence-driven scheme is proposed to select the starting node and the order of updating sequences.

- In each Graph LSTM unit, different forget gates for the neighboring nodes are learned to dynamically incorporate the local contextual interactions in accordance with their semantic relations.

METHOD

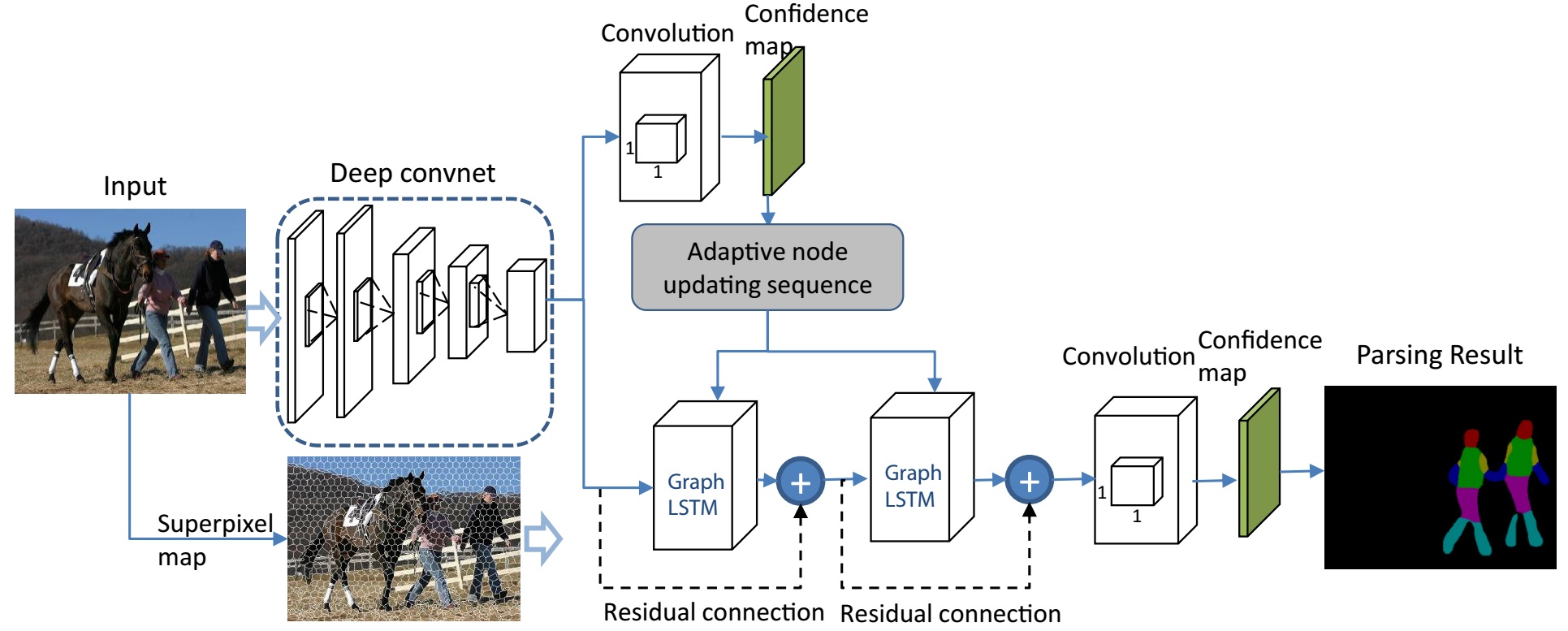

The main steps of the method are shown in the figure above.

- The input image first passes through a stack of convolutional layers to generate the convolutional feature maps.

- The convolutional feature maps are further used to generate an initial semantic confidence map for each pixel.

- The input image is over-segmented to multiple superpixels. For each superpixel, a feature vector is extracted from the upsampled convolutional feature maps.

- The first Graph LSTM takes the feature vector of every superpixel as input and computes an updated hidden state for each superpixel node.

- The second Graph LSTM takes the feature vector of every superpixel and the output of the first Graph LSTM as input.

- The superpixels are updated in an order determined by their initial confidence.

- Several 1×1 convolution filters are employed to produce the final parsing results.
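The superpixel feature extraction and the confidence-driven ordering in the steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code. It assumes that a superpixel's feature vector is the average of the per-pixel features inside it, that a superpixel's confidence is the highest averaged class score, and that nodes are visited from most to least confident. The function names `superpixel_features` and `update_order` are hypothetical.

```python
import numpy as np


def superpixel_features(feature_map, labels):
    """Average-pool an (H, W, C) feature map over each superpixel.

    labels is an (H, W) integer map with values in [0, K).
    Returns a (K, C) array with one feature vector per superpixel.
    Averaging is an assumption made for this sketch.
    """
    c = feature_map.shape[-1]
    flat_labels = labels.reshape(-1).astype(np.intp)
    flat_feats = feature_map.reshape(-1, c)
    k = int(flat_labels.max()) + 1
    sums = np.zeros((k, c), dtype=np.float64)
    # Unbuffered add so repeated labels accumulate correctly.
    np.add.at(sums, flat_labels, flat_feats)
    counts = np.bincount(flat_labels, minlength=k).astype(np.float64)
    return sums / np.maximum(counts, 1.0)[:, None]


def update_order(confidence_map, labels):
    """Return superpixel indices sorted by descending confidence.

    confidence_map is (H, W, num_classes) with per-pixel class scores.
    A node's confidence is taken as the maximum of its averaged class
    scores (an assumption made for this sketch).
    """
    node_scores = superpixel_features(confidence_map, labels)
    node_conf = node_scores.max(axis=1)
    return np.argsort(-node_conf, kind="stable")
```

The first index returned by `update_order` would serve as the starting node, and the remaining indices give the order in which the other superpixels are updated.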

SOME DETAILS

A graph is built over the superpixels: each superpixel is a node, and two nodes are linked when their superpixels are spatially adjacent. The history information that the Graph LSTM uses for a superpixel comes from its adjacent superpixels.
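As a rough illustration of this graph construction, the sketch below derives the adjacency sets from a superpixel label map. It assumes 4-connectivity (two superpixels are adjacent if they share a horizontal or vertical pixel border), which the notes do not specify. The name `superpixel_adjacency` is hypothetical.

```python
import numpy as np


def superpixel_adjacency(labels):
    """Return {node: set of adjacent nodes} for an (H, W) label map.

    Two superpixels are treated as adjacent when any of their pixels
    touch horizontally or vertically (4-connectivity, an assumption).
    """
    k = int(labels.max()) + 1
    neighbors = {i: set() for i in range(k)}
    # Pair every pixel with its right neighbor and its bottom neighbor.
    pairs = [
        (labels[:, :-1], labels[:, 1:]),
        (labels[:-1, :], labels[1:, :]),
    ]
    for a, b in pairs:
        mask = a != b  # keep only pixel pairs that cross a superpixel border
        for u, v in zip(a[mask].tolist(), b[mask].tolist()):
            neighbors[u].add(v)
            neighbors[v].add(u)
    return neighbors
```

For example, `superpixel_adjacency(np.array([[0, 1, 2]]))` links node 1 to both 0 and 2, while nodes 0 and 2 are not linked to each other.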

ADVANTAGES

- Constructed on superpixels generated by oversegmentation, the Graph LSTM is more naturally aligned with the visual patterns in the image.

- Learning a separate forget gate for each neighboring node when updating the hidden state of a node helps model the varied relations between the node and its neighbors.
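To make the idea of per-neighbor forget gates concrete, here is a simplified single-node update in NumPy. It is a sketch under stated assumptions rather than the paper's exact formulation: the weight names in `p`, the helper `init_params`, and the choice to average the gated neighbor memories are all illustrative, and the sketch ignores whether a neighbor has already been updated in the current pass.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def init_params(d, h, seed=0, scale=0.1):
    """Random weights for the sketch; all names are hypothetical."""
    rng = np.random.default_rng(seed)
    p = {}
    for g in ("u", "f", "o", "c"):
        p["W" + g] = scale * rng.standard_normal((h, d))  # input weights
        p["U" + g] = scale * rng.standard_normal((h, h))  # own hidden state
        p["V" + g] = scale * rng.standard_normal((h, h))  # neighbor hidden state
        p["b" + g] = np.zeros(h)
    return p


def graph_lstm_step(x_i, h_i, m_i, nbr_h, nbr_m, p):
    """One simplified update of node i.

    x_i: (D,) input feature; h_i, m_i: (H,) previous hidden and memory state.
    nbr_h, nbr_m: (N, H) hidden and memory states of the N >= 1 neighbors.
    """
    h_bar = nbr_h.mean(axis=0)  # averaged neighbor hidden state

    def gate(name, act):
        z = p["W" + name] @ x_i + p["U" + name] @ h_i
        return act(z + p["V" + name] @ h_bar + p["b" + name])

    g_in = gate("u", sigmoid)    # input gate
    g_out = gate("o", sigmoid)   # output gate
    g_cand = gate("c", np.tanh)  # candidate memory content
    # Forget gate for the node's own memory.
    g_self = sigmoid(p["Wf"] @ x_i + p["Uf"] @ h_i + p["bf"])
    # One forget gate per neighbor, driven by that neighbor's hidden state.
    g_nbr = sigmoid(p["Wf"] @ x_i + nbr_h @ p["Vf"].T + p["bf"])  # (N, H)
    m_new = (g_nbr * nbr_m).mean(axis=0) + g_self * m_i + g_in * g_cand
    h_new = np.tanh(g_out * m_new)
    return h_new, m_new
```

Because each row of `g_nbr` depends on a different neighbor's hidden state, the node can retain memory from some neighbors and suppress it from others, which is the behavior the second advantage describes.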