Reading Note: Progressive Growing of GANs for Improved Quality, Stability, and Variation

TITLE: Progressive Growing of GANs for Improved Quality, Stability, and Variation

AUTHOR: Tero Karras, Timo Aila, Samuli Laine, Jaakko Lehtinen

ASSOCIATION: NVIDIA

FROM: ICLR2018

CONTRIBUTION

A training methodology is proposed for GANs that starts with low-resolution images and then progressively increases the resolution by adding layers to the networks. This incremental nature allows the training to first discover the large-scale structure of the image distribution and then shift attention to increasingly finer-scale detail, instead of having to learn all scales simultaneously.

METHOD

PROGRESSIVE GROWING OF GANS

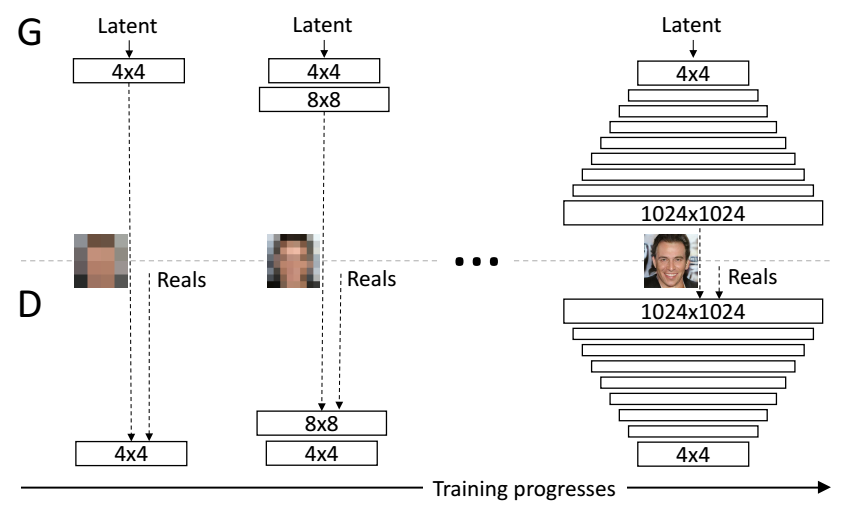

The figure above illustrates the training procedure of this work.

The training starts with both the generator $G$ and discriminator $D$ having a low spatial resolution of $4 \times 4$ pixels. As the training advances, successive layers are incrementally added to $G$ and $D$, thus increasing the spatial resolution of the generated images. All existing layers remain trainable throughout the process. Here $N \times N$ refers to convolutional layers operating on $N \times N$ spatial resolution. This allows stable synthesis in high resolutions and also speeds up training considerably.

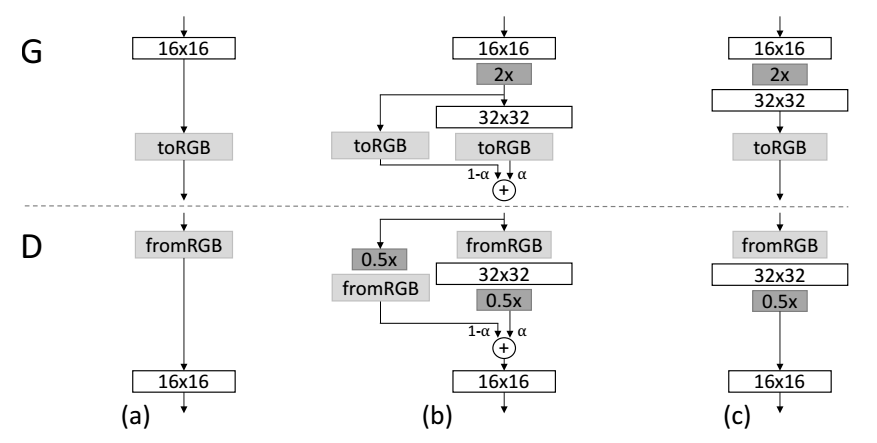

When new layers are added to double the resolution of the generator $G$ and discriminator $D$, they are faded in smoothly. The example in the figure above illustrates the transition from $16 \times 16$ images (a) to $32 \times 32$ images (c). During the transition (b), the layers that operate on the higher resolution are treated like a residual block whose weight $\alpha$ increases linearly from 0 to 1. Here 2x and 0.5x refer to doubling and halving the image resolution using nearest neighbor filtering and average pooling, respectively. toRGB represents a layer that projects feature vectors to RGB colors, and fromRGB does the reverse; both use $1 \times 1$ convolutions. When training the discriminator, the real images are downscaled to match the current resolution of the network. During a resolution transition, the real images are interpolated between two resolutions, similarly to how the generator output combines two resolutions.
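The fade-in on the generator side can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: images are single-channel lists of rows, and the function names `nearest_upsample_2x` and `fade_in` are hypothetical, not taken from the paper's code.

```python
def nearest_upsample_2x(img):
    """Double the resolution of a 2D image (list of rows) by nearest-neighbor repetition."""
    out = []
    for row in img:
        wide = [v for v in row for _ in range(2)]  # repeat every pixel horizontally
        out.append(wide)
        out.append(list(wide))                     # repeat every row vertically
    return out


def fade_in(low_res_out, high_res_out, alpha):
    """Blend the upsampled old output with the output of the new layers.

    alpha = 0 keeps only the old (upsampled) path; alpha = 1 keeps only the new path.
    """
    up = nearest_upsample_2x(low_res_out)
    return [
        [(1.0 - alpha) * u + alpha * h for u, h in zip(up_row, hi_row)]
        for up_row, hi_row in zip(up, high_res_out)
    ]


low = [[0.0, 1.0], [1.0, 0.0]]          # toy 2x2 output of the old toRGB layer
high = [[1.0] * 4 for _ in range(4)]    # toy 4x4 output of the new toRGB layer
blended = fade_in(low, high, alpha=0.25)
```

During training, `alpha` would be increased linearly from 0 to 1 over the transition phase, so the new layers take over gradually.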

INCREASING VARIATION USING MINIBATCH STANDARD DEVIATION

- Compute the standard deviation for each feature in each spatial location over the minibatch.

- Average these estimates over all features and spatial locations to arrive at a single value.

- Construct one additional (constant) feature map by replicating the value, and concatenate it to all spatial locations and over the minibatch.
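The three steps above can be sketched as follows. This is a minimal illustration, assuming activations are stored as nested lists indexed `batch[n][c][y][x]` and that the population standard deviation is used; the function name is hypothetical.

```python
from statistics import pstdev


def minibatch_stddev_feature(batch):
    """Append one constant feature map holding the averaged minibatch standard deviation.

    batch is indexed as batch[n][c][y][x].
    """
    n, c = len(batch), len(batch[0])
    h, w = len(batch[0][0]), len(batch[0][0][0])

    # Step 1: standard deviation over the minibatch for each feature and location.
    stds = [
        pstdev([batch[i][ch][y][x] for i in range(n)])
        for ch in range(c) for y in range(h) for x in range(w)
    ]
    # Step 2: average all estimates into a single value.
    value = sum(stds) / len(stds)
    # Step 3: replicate the value into an H x W map and append it to every sample.
    return [sample + [[[value] * w for _ in range(h)]] for sample in batch]
```

A minibatch of identical samples yields an extra map of zeros, which gives the discriminator a direct signal about missing variation.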

NORMALIZATION IN GENERATOR AND DISCRIMINATOR

EQUALIZED LEARNING RATE. A trivial $\mathcal{N}(0, 1)$ initialization is used, and the weights are then explicitly scaled at runtime. To be precise, $\hat{w}_i = w_i/c$, where $w_i$ are the weights and $c$ is the per-layer normalization constant from He’s initializer. The benefit of doing this dynamically instead of during initialization is somewhat subtle, and relates to the scale-invariance in commonly used adaptive stochastic gradient descent methods.
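A minimal sketch of runtime weight scaling for a fully connected layer is given below. It assumes the runtime factor is the He standard deviation $\sqrt{2/\text{fan\_in}}$, so dividing by $c$ in the notation above corresponds to multiplying by this factor. The class and function names are hypothetical.

```python
import math
import random


def he_scale(fan_in):
    """Runtime scale assumed here: the He initializer's standard deviation sqrt(2 / fan_in)."""
    return math.sqrt(2.0 / fan_in)


class EqualizedLinear:
    """Fully connected layer whose stored weights stay N(0, 1); scaling happens at call time."""

    def __init__(self, fan_in, fan_out, seed=0):
        rng = random.Random(seed)
        # Stored parameters are drawn from a plain N(0, 1), with no per-layer scaling.
        self.weights = [[rng.gauss(0.0, 1.0) for _ in range(fan_in)] for _ in range(fan_out)]
        self.scale = he_scale(fan_in)

    def __call__(self, x):
        # The effective weight is weight * scale, applied on every forward pass.
        return [self.scale * sum(w * v for w, v in zip(row, x)) for row in self.weights]
```

Because the optimizer only ever sees the unscaled parameters, all layers share the same dynamic range, which is the point of scaling at runtime rather than at initialization.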

PIXELWISE FEATURE VECTOR NORMALIZATION IN GENERATOR. To disallow the scenario where the magnitudes in the generator and discriminator spiral out of control as a result of competition, the feature vector is normalized in each pixel to unit length in the generator after each convolutional layer, using a variant of “local response normalization”, configured as

$$ b_{x,y}=a_{x,y}/ \sqrt{\frac{1}{N} \sum_{j=0}^{N-1}(a_{x,y}^j)^2 + \epsilon} $$

where $\epsilon=10^{-8}$, $N$ is the number of feature maps, and $a_{x,y}$ and $b_{x,y}$ are the original and normalized feature vectors in pixel $(x,y)$, respectively.
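For a single pixel, the formula above can be written directly in Python. This is a sketch for one feature vector only; the function name `pixel_norm` is an assumption for illustration.

```python
import math


def pixel_norm(features, eps=1e-8):
    """Normalize the feature vector of one pixel across its N feature maps."""
    mean_sq = sum(a * a for a in features) / len(features)
    return [a / math.sqrt(mean_sq + eps) for a in features]


normalized = pixel_norm([3.0, 4.0])
```

Note that, because of the $1/N$ factor, it is the mean of the squared components that becomes approximately 1 after normalization.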

Reading Note: Be Your Own Prada: Fashion Synthesis with Structural Coherence

TITLE: Be Your Own Prada: Fashion Synthesis with Structural Coherence

AUTHOR: Shizhan Zhu, Sanja Fidler, Raquel Urtasun, Dahua Lin, Chen Change Loy

ASSOCIATION: The Chinese University of Hong Kong, University of Toronto, Vector Institute, Uber Advanced Technologies Group

FROM: ICCV2017

CONTRIBUTION

A method that can generate new outfits onto existing photos is developed so that it can

- retain the body shape and pose of the wearer,

- produce regions and the associated textures that conform to the language description,

- enforce coherent visibility of body parts.

METHOD

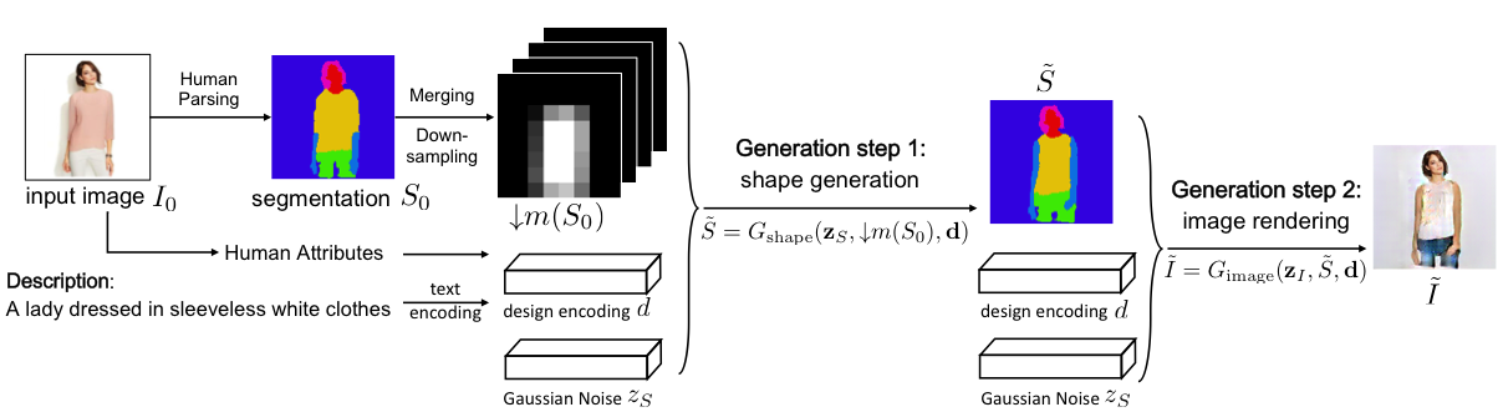

Given an input photograph of a person and a sentence description of a new desired outfit, the model first generates a segmentation map $\tilde{S}$ using the generator from the first GAN. Then the new image is rendered with another GAN, with guidance from the segmentation map generated in the previous step. At test time, the final rendered image is obtained with a forward pass through the two GAN networks. The workflow of this work is shown in the figure above.

The first generator $G_{shape}$ aims to generate the desired semantic segmentation map $\tilde{S}$ by conditioning on the spatial constraint $\downarrow m(S_0)$, the design coding $\textbf{d}$, and the Gaussian noise $\textbf{z}_S$. $S_0$ is the original pixel-wise one-hot segmentation map of the input image with height $m$, width $n$ and $L$ channels, where $L$ is the number of labels. $\downarrow m(S_0)$ downsamples and merges $S_0$ so that it is agnostic of the clothing worn in the original image and only captures information about the user’s body. Thus $G_{shape}$ can generate a segmentation map $\tilde{S}$ with sleeves from a segmentation map $S_0$ without sleeves.

The second generator $G_{image}$ renders the final image $\tilde{I}$ based on the generated segmentation map $\tilde{S}$, design coding $\textbf{d}$, and the Gaussian noise $\textbf{z}_I$.

Reading Note: Detect to Track and Track to Detect

TITLE: Detect to Track and Track to Detect

AUTHOR: Christoph Feichtenhofer, Axel Pinz, Andrew Zisserman

ASSOCIATION: Graz University of Technology, University of Oxford

FROM: arXiv:1710.03958

CONTRIBUTION

- A ConvNet architecture is set up for simultaneous detection and tracking, using a multi-task objective for frame-based object detection and across-frame track regression.

- Correlation features that represent object co-occurrences across time are introduced to aid the ConvNet during tracking.

- Frame-level detections are linked to produce high accuracy detections at the video-level based on across-frame tracklets.

METHOD

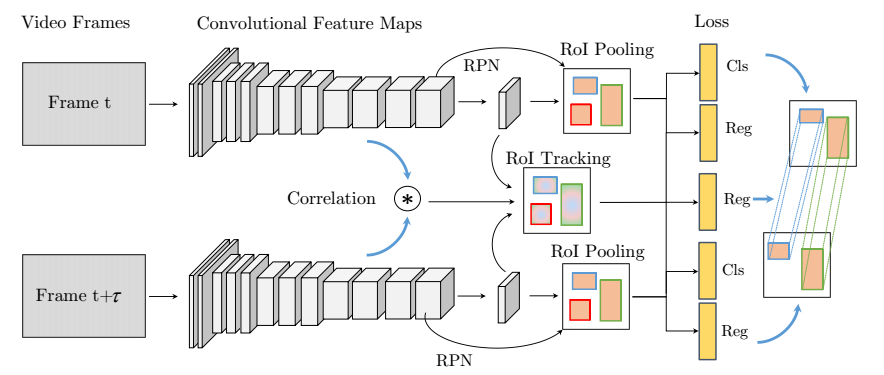

For frame-level detections, this work adopts R-FCN as the base framework to detect objects in a single frame. The inter-frame correlation features are extracted from the feature maps of the two frames. A multi-task loss of localization, classification and displacement is used to train the network. The workflow of this work is shown in the figure above.

The key innovation of this work is an operation denoted as ROI tracking. The input of this operation is the bounding box regression features of the two frames, $\textbf{x}_{reg}^{t}$ and $\textbf{x}_{reg}^{t+\tau}$, and the correlation features $\textbf{x}_{corr}^{t,t+\tau}$, which are concatenated. The correlation layer performs point-wise feature comparison of two feature maps $\textbf{x}_{l}^{t}$ and $\textbf{x}_{l}^{t+\tau}$:

$$ \textbf{x}_{corr}^{t,t+\tau} (i,j,p,q) = \langle \textbf{x}_{l}^{t} (i,j), \textbf{x}_{l}^{t+\tau} (i+p,j+q) \rangle $$

where $-d \leq p \leq d$ and $-d \leq q \leq d$ are offsets to compare features in a square neighbourhood around the locations $i$, $j$ in the feature map, defined by the maximum displacement $d$.
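The correlation operation can be sketched with plain nested loops. The sketch assumes feature maps are stored as `feat[i][j]` holding a list of channel values, and that offsets falling outside the map produce 0; the boundary handling and the function name are assumptions, not details given in this note.

```python
def correlation(feat_t, feat_t_tau, d):
    """Dot-product correlation between two feature maps within a (2d+1) x (2d+1) window.

    Returns a dict mapping (i, j, p, q) to the inner product of feat_t[i][j]
    and feat_t_tau[i + p][j + q]; out-of-range neighbours give 0.0 (assumption).
    """
    h, w = len(feat_t), len(feat_t[0])
    out = {}
    for i in range(h):
        for j in range(w):
            for p in range(-d, d + 1):
                for q in range(-d, d + 1):
                    ii, jj = i + p, j + q
                    if 0 <= ii < h and 0 <= jj < w:
                        out[(i, j, p, q)] = sum(
                            a * b for a, b in zip(feat_t[i][j], feat_t_tau[ii][jj])
                        )
                    else:
                        out[(i, j, p, q)] = 0.0
    return out


# Toy 1 x 2 maps with 2 channels; the second frame is the first shifted by one column.
frame_t = [[[1.0, 0.0], [0.0, 1.0]]]
frame_t_tau = [[[0.0, 1.0], [1.0, 0.0]]]
corr = correlation(frame_t, frame_t_tau, d=1)
```

In this toy case the response peaks at the offset that matches the shift, which is the cue the tracking branch can use to regress displacements.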

The loss function is written as

$$ Loss(\{p_i\},\{b_i\},\{\Delta_i\}) = \frac{1}{N} \sum_{i=1}^{N}L_{cls}(p_i,c_i^{*}) + \lambda \frac{1}{N_{fg}} \sum_{i=1}^{N} [c_i^{*}>0] L_{reg}(b_i, b_i^{*}) + \lambda \frac{1}{N_{tra}} \sum_{i=1}^{N_{tra}} L_{tra}(\Delta_i^{t+\tau}, \Delta_i^{*,t+\tau}) $$

A class-wise linking score is defined to combine detections and tracks across time

$$ s_{c}(D_{i,c}^t,D_{j,c}^{t+\tau},T^{t,t+\tau})=p_{i,c}^t+p_{j,c}^{t+\tau}+\phi(D_{i}^{t},D_{j}^{t+\tau},T^{t,t+\tau}) $$

where the pairwise term $\phi$ evaluates to 1 if the IoU overlap of a track correspondence $T^{t,t+\tau}$ with the detection boxes $D_{i}^{t}$, $D_{j}^{t+\tau}$ is larger than 0.5, and to 0 otherwise. $p_{i,c}^{t}$ and $p_{j,c}^{t+\tau}$ are the softmax probabilities for class $c$. The optimal path across a video can be found by maximizing the scores over the duration $T$ of the video. Once the optimal tube is found, the detections corresponding to that tube are removed, and the detection scores in the tube are reweighted by adding the mean of the 50% highest scores in that tube. The procedure is then applied again to the remaining detections.
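The linking score for one pair of detections can be sketched as below. Boxes are assumed to be `(x1, y1, x2, y2)` tuples, and a track is assumed to be a pair of boxes, one in frame $t$ and one in frame $t+\tau$. Requiring both ends of the track to overlap their detection by more than 0.5 is one reading of the definition above, and the function names are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def linking_score(p_t, box_t, p_t_tau, box_t_tau, track):
    """Class-wise linking score: two detection probabilities plus the pairwise track term."""
    track_start, track_end = track
    # Assumption: both ends of the track must overlap their detection with IoU > 0.5.
    phi = 1.0 if iou(track_start, box_t) > 0.5 and iou(track_end, box_t_tau) > 0.5 else 0.0
    return p_t + p_t_tau + phi
```

A pair of detections that is confirmed by a track therefore scores one unit higher than an unconfirmed pair with the same class probabilities.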

Reading Note: Interpretable Convolutional Neural Networks

TITLE: Interpretable Convolutional Neural Networks

AUTHOR: Quanshi Zhang, Ying Nian Wu, Song-Chun Zhu

ASSOCIATION: UCLA

FROM: arXiv:1710.00935

CONTRIBUTION

- Slightly revised CNNs are proposed to improve their interpretability; the revision can be broadly applied to CNNs with different network structures.

- No annotations of object parts and/or textures are needed to ensure that each high-layer filter has a certain semantic meaning. Each filter automatically learns a meaningful object-part representation without any additional human supervision.

- When a traditional CNN is modified into an interpretable CNN, the experimental settings for learning need not be changed, i.e. the interpretable CNN does not change the previous loss function on the top layer and uses exactly the same training samples.

- The design for interpretability may decrease the discriminative power of the network a bit, but such a decrease is limited within a small range.

METHOD

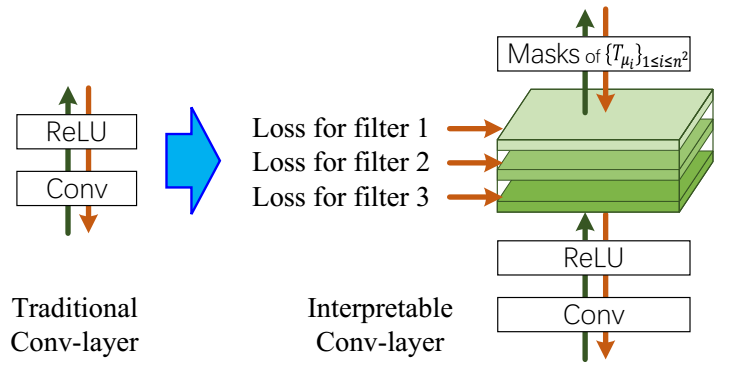

The loss for a filter is illustrated in the figure above.

A feature map is expected to be strongly activated in images of a certain category and to keep silent on other images. Therefore, a number of templates are used to evaluate the fitness between the current feature map and the ideal distribution of activations w.r.t. its semantics. Each template is an ideal distribution of activations over spatial locations. The loss for a filter is formulated as the negative mutual information between the feature maps $\textbf{X}$ and the templates $\textbf{T}$.

$$ Loss_{f} = - MI(\textbf{X}; \textbf{T}) $$

The loss can be rewritten as

$$ Loss_{f} = - H(\textbf{T}) + H(\textbf{T}'=\{T^{-}, \textbf{T}^{+}\}|\textbf{X})+\sum_{x}p(\textbf{T}^{+},x)H(\textbf{T}^{+}|X=x) $$

The first term is a constant denoting the prior entropy of $\textbf{T}^{+}$. The second term encourages a low conditional entropy of inter-category activations, which means that a well-learned filter needs to be exclusively activated by a certain category and keep silent on other categories. The third term encourages a low conditional entropy of the spatial distribution of activations. A well-learned filter should only be activated by a single region of the feature map, instead of repetitively appearing at different locations.
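To make the quantity $-MI(\textbf{X}; \textbf{T})$ concrete, the sketch below computes mutual information from a small discrete joint probability table. The table `joint[t][x] = p(T=t, X=x)` is a toy stand-in chosen for illustration; how the probabilities are actually derived from feature maps and templates is not covered by this note.

```python
import math


def mutual_information(joint):
    """Mutual information (in nats) of a discrete joint table joint[t][x] = p(T=t, X=x)."""
    p_t = [sum(row) for row in joint]          # marginal over templates
    p_x = [sum(col) for col in zip(*joint)]    # marginal over feature maps
    mi = 0.0
    for t, row in enumerate(joint):
        for x, p in enumerate(row):
            if p > 0:
                mi += p * math.log(p / (p_t[t] * p_x[x]))
    return mi


def filter_loss(joint):
    """Filter loss as the negative mutual information, following the formula above."""
    return -mutual_information(joint)
```

When templates and feature maps are independent the loss is 0; when each feature map determines its template the loss reaches its minimum, which is the behaviour the training encourages.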

SOME THOUGHTS

This loss can reduce the redundancy among filters, which may be used to compress the model.

Winsock and Websocket video transmitting based on OpenCV

This repo implements transmitting cv::Mat frames via winsock to a front end, which displays the images using WebSocket.

Server Code

The server code is implemented in websocket_server_c, which is written in C++ and based on winsock2 on Windows. The server first performs the handshake and sets up a connection with the client over TCP. Once the connection is set up, a video is transmitted to the front end frame by frame. The frames are extracted using OpenCV, encoded in JPEG format, and then encoded as strings using base64.
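The per-frame encoding step can be sketched in Python as follows. This is not the repo's C++ code; it only illustrates, under the WebSocket framing rules of RFC 6455, how already-encoded JPEG bytes could be base64-encoded and wrapped in an unmasked server-to-client text frame. The function names are hypothetical.

```python
import base64
import struct


def encode_text_frame(payload: bytes) -> bytes:
    """Wrap a payload in a single unmasked WebSocket text frame (server to client)."""
    header = bytes([0x81])  # FIN bit set, opcode 0x1 (text)
    n = len(payload)
    if n < 126:
        header += bytes([n])                             # length fits in 7 bits
    elif n < 65536:
        header += bytes([126]) + struct.pack(">H", n)    # 16-bit extended length
    else:
        header += bytes([127]) + struct.pack(">Q", n)    # 64-bit extended length
    return header + payload


def frame_for_jpeg(jpeg_bytes: bytes) -> bytes:
    """Base64-encode JPEG bytes and wrap the resulting text in a WebSocket frame."""
    return encode_text_frame(base64.b64encode(jpeg_bytes))
```

On the browser side, the received string can be shown by prefixing it with `data:image/jpeg;base64,` and assigning it to an image element's source.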

Note that many examples of sending messages over sockets skip the steps of constructing the WebSocket connection, which are implemented in this repo.
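The connection step that is often skipped is the WebSocket opening handshake. The Python sketch below shows the server's side of it as defined in RFC 6455: the client's `Sec-WebSocket-Key` is concatenated with a fixed GUID, hashed with SHA-1, and returned base64-encoded. It illustrates the protocol rather than the repo's C++ implementation, and the function names are hypothetical.

```python
import base64
import hashlib

# Fixed GUID defined by RFC 6455 for computing Sec-WebSocket-Accept.
WEBSOCKET_GUID = "258EAFA5-E914-47DA-95CA-C5AB0DC85B11"


def accept_key(client_key: str) -> str:
    """Compute the Sec-WebSocket-Accept value for a client's Sec-WebSocket-Key."""
    digest = hashlib.sha1((client_key + WEBSOCKET_GUID).encode("ascii")).digest()
    return base64.b64encode(digest).decode("ascii")


def handshake_response(client_key: str) -> str:
    """Build the HTTP 101 response that completes the opening handshake."""
    return (
        "HTTP/1.1 101 Switching Protocols\r\n"
        "Upgrade: websocket\r\n"
        "Connection: Upgrade\r\n"
        f"Sec-WebSocket-Accept: {accept_key(client_key)}\r\n"
        "\r\n"
    )
```

Only after this response has been sent will a browser accept data frames on the connection.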

Client Code

The client code is a Django project in websocket_client_django. Its only function is to receive the messages from the server and display the frames on the web.

Notice

This code is just a demo of using sockets in C++ and on the web. There must be better ways to do live video streaming.

Reading Note: Pyramid Scene Parsing Network

TITLE: Pyramid Scene Parsing Network

AUTHOR: Hengshuang Zhao, Jianping Shi, Xiaojuan Qi, Xiaogang Wang, Jiaya Jia

ASSOCIATION: The Chinese University of Hong Kong, SenseTime

FROM: arXiv:1612.01105

CONTRIBUTIONS

- A pyramid scene parsing network is proposed to embed difficult scenery context features in an FCN based pixel prediction framework.

- An effective optimization strategy is developed for deep ResNet based on deeply supervised loss.

- A practical system is built for state-of-the-art scene parsing and semantic segmentation where all crucial implementation details are included.

METHOD

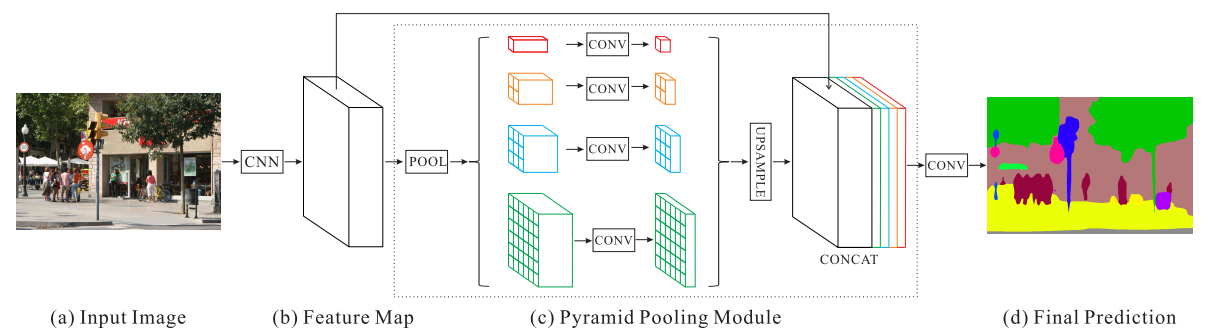

The framework of PSPNet (Pyramid Scene Parsing Network) is illustrated in the figure above.

Important Observations

Three main observations motivated the authors to propose the pyramid pooling module as an effective global context prior.

- Mismatched Relationship: Context relationship is universal and important, especially for complex scene understanding. There exist co-occurrent visual patterns. For example, an airplane is likely to be on a runway or flying in the sky, but not over a road.

- Confusion Categories: Predictions that mix similar or confusing categories should be excluded so that a whole object is covered by a sole label rather than multiple labels. This problem can be remedied by utilizing the relationship between categories.

- Inconspicuous Classes: To improve performance for remarkably small or large objects, one should pay much attention to different sub-regions that contain inconspicuous-category stuff.

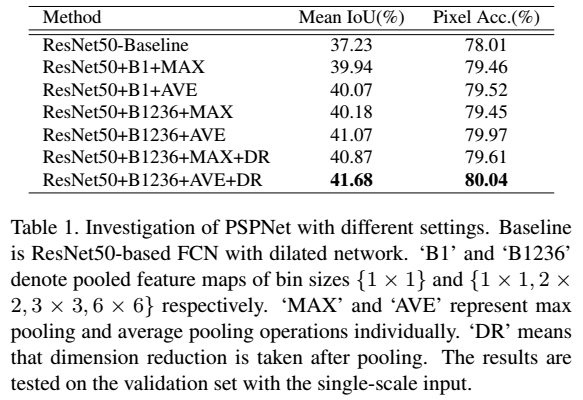

Pyramid Pooling Module

The pyramid pooling module fuses features under four different pyramid scales. The coarsest level, highlighted in red, is global pooling that generates a single-bin output. The following pyramid levels separate the feature map into different sub-regions and form pooled representations for different locations. The outputs of the different levels in the pyramid pooling module contain feature maps of varied sizes. To maintain the weight of the global feature, a 1×1 convolution layer is used after each pyramid level to reduce the dimension of the context representation to 1/N of the original one if the level size of the pyramid is N. The low-dimension feature maps are then directly upsampled via bilinear interpolation to the same size as the original feature map. Finally, the different levels of features are concatenated as the final pyramid pooling global feature.
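The pooling and channel bookkeeping of the module can be sketched in plain Python. The sketch makes several assumptions: it works on a single-channel map whose side lengths are divisible by the bin count, it uses the bin sizes 1, 2, 3 and 6 reported in the PSPNet paper (this note does not list them), it assumes the pooled branches are concatenated with the original feature map, and it leaves out the 1×1 convolution weights and the bilinear upsampling. Function names are hypothetical.

```python
def average_pool_bins(feature_map, bins):
    """Average-pool a 2D map (list of rows) into bins x bins cells.

    Assumes the height and width are divisible by `bins`.
    """
    h, w = len(feature_map), len(feature_map[0])
    bh, bw = h // bins, w // bins
    pooled = []
    for by in range(bins):
        row = []
        for bx in range(bins):
            cell = [feature_map[y][x]
                    for y in range(by * bh, (by + 1) * bh)
                    for x in range(bx * bw, (bx + 1) * bw)]
            row.append(sum(cell) / len(cell))
        pooled.append(row)
    return pooled


def pyramid_output_channels(in_channels, bin_sizes=(1, 2, 3, 6)):
    """Channels after concatenating the input with one reduced branch per pyramid level."""
    per_level = in_channels // len(bin_sizes)  # the 1x1 conv reduces each level to 1/N
    return in_channels + per_level * len(bin_sizes)


fmap = [[float(y * 6 + x) for x in range(6)] for y in range(6)]
levels = {b: average_pool_bins(fmap, b) for b in (1, 2, 3, 6)}
```

With four levels each reduced to a quarter of the input channels, the concatenated feature has twice as many channels as the input, so the global context carries the same weight as the original features.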

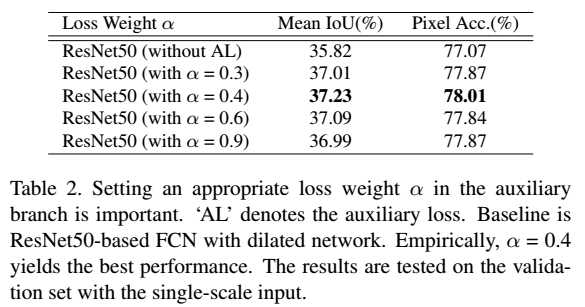

Deep Supervision for ResNet-Based FCN

Apart from the main branch using softmax loss to train the final classifier, another classifier is applied after the fourth stage. The auxiliary loss helps optimize the learning process, while the master branch loss takes the most responsibility.

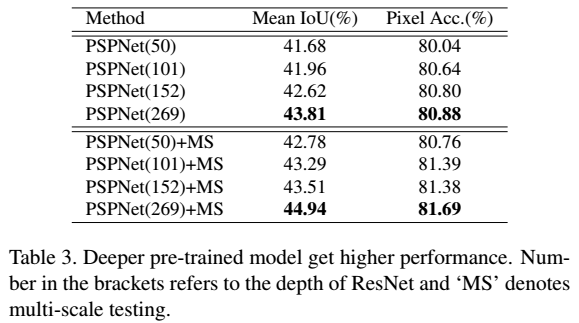

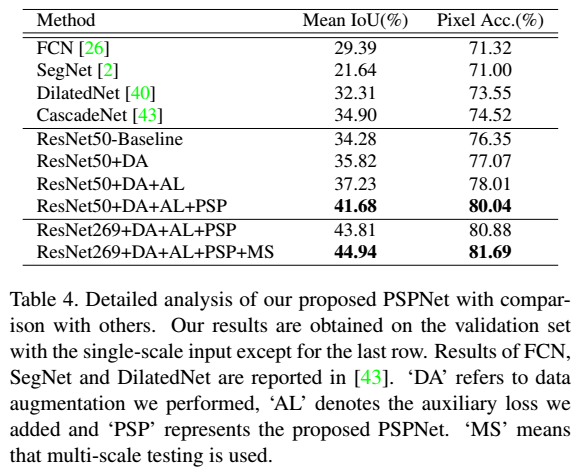

Ablation Study

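Enabling Linux Bash on Win10

Microsoft's preview build 14316 of the Win10 Anniversary Update includes most of the announced features, among them an important one: native support for the Linux Bash command line. Users can now use Bash on Win10 without a Linux system or a Mac. So how do you enable the Linux Bash command line on Win10? You can try the steps below.

- First, upgrade Win10 to Build 14316. Then go to Settings > Update & security > For developers and select Developer mode.

- Search for "Programs and Features", choose "Turn Windows features on or off", enable Windows Subsystem for Linux (Beta), and restart the system.

- To install Bash, open a command prompt and enter bash; it is then ready to use.

- The system directory is under C:\Users\a\AppData\Local\lxss\home\tztx\CODE\caffe-online-demo.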

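Installing Electron on Windows 10 x64

Installing Node.js

Download the installer that suits your system from the official website and install it. After installation, run npm -v to check the npm version and confirm that it was installed correctly.

Run node -v to check the Node.js version and confirm that Node is installed.

Installing the cnpm tool

Downloading from the official npm registry is slow, so the cnpm command-line tool customized by Taobao can be used in place of the default npm. The install command is npm install -g cnpm --registry=https://registry.npm.taobao.org

Installing Electron

The install command is cnpm install -g electron.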

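Dunkirk

I just watched Dunkirk. What saddened me most was the story of one pilot. Three of them set out on the mission together. One was shot down and killed outright; another was shot down midway but ditched in the sea and was rescued. The last one reached the beach at Dunkirk. He was low on fuel but chose to keep fighting. When the fuel finally ran out and he was gliding with the propeller no longer turning, he still shot down an enemy plane, and then he glided down to land on the beach. I thought he would be rescued, but instead he was taken prisoner. It felt as if everyone else was saved and he alone was abandoned.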